Alisson Enz

Founder & CEO

Go look at your best engineer's Git history from 2024. Now look at their history from this year. The volume of quality code they're shipping has increased dramatically, and it has nothing to do with working longer hours.

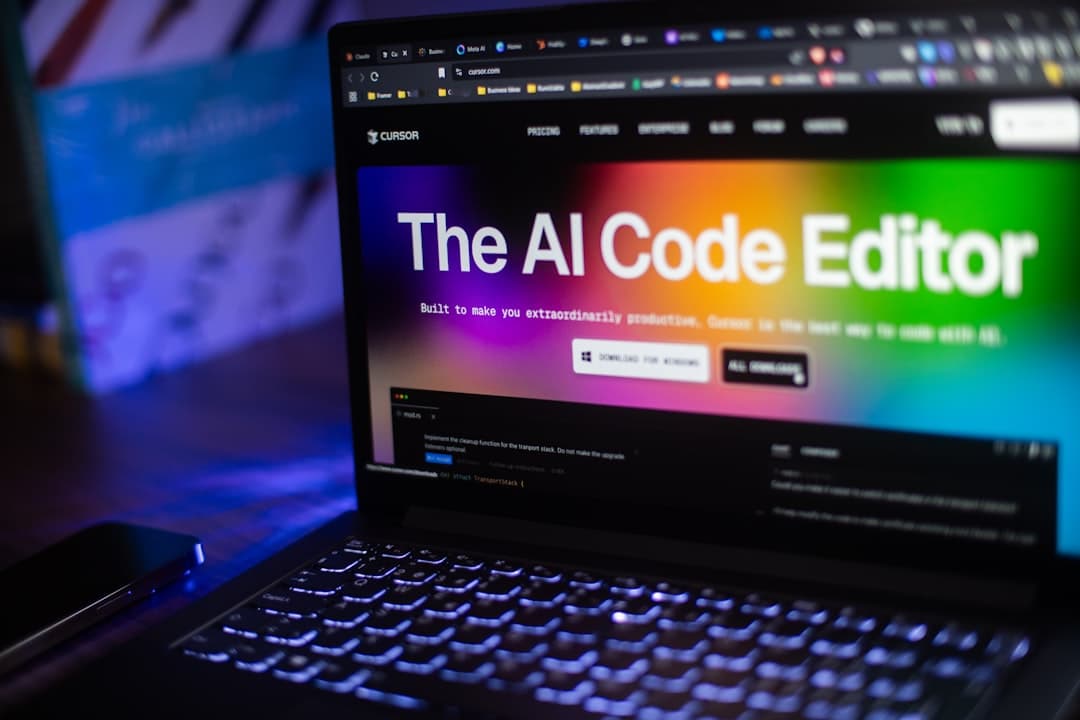

They're using GitHub Copilot for boilerplate. They're using Claude or ChatGPT to draft architectural approaches before writing a single line. They're generating test scaffolding in seconds instead of spending thirty minutes on setup. They're debugging faster because they can describe the problem in natural language and get relevant suggestions.

This shift already happened. It happened quietly. Most engineering managers noticed the output increase but attributed it to other things: better processes, team maturity, improved tooling. But a big chunk of that increase comes from AI-assisted development.

GitHub's own controlled experiment found that developers using Copilot completed a coding task roughly 55% faster than those working without it. Our internal data from the engineers we place tells a similar story: engineers who use AI tools effectively have shorter cycle times, write more comprehensive tests, and produce better documentation.

This isn't because AI writes perfect code. It doesn't. The productivity gain comes from eliminating low-value work: boilerplate, repetitive patterns, initial test structure, documentation drafts. When a developer doesn't spend twenty minutes writing a CRUD endpoint they've written a hundred times before, they spend that time on architecture decisions, edge cases, and code review.

Two years ago, "productive developer" meant someone who could write code quickly and correctly from memory. Today, "productive developer" means someone who knows when to write code themselves and when to use AI assistance, and can judge the quality of AI-generated output.

That second skill is new and it matters a lot. AI tools generate plausible code that's often subtly wrong. A developer who blindly accepts AI output introduces bugs. A developer who reviews AI output critically, keeps the good parts, fixes the bad parts, and understands why the AI got certain things wrong: that developer is genuinely faster.

The shift is from typing speed to judgment speed. The bottleneck moved from "how fast can you write code" to "how fast can you evaluate approaches and make good decisions."

Here's the problem: most hiring processes still evaluate the old skill set.

Timed coding challenges measure raw coding speed. Whiteboard interviews measure whether someone can write a sorting algorithm from memory. Take-home projects evaluate whether someone can build a feature in isolation.

None of these tell you whether a candidate uses AI tools effectively. None of them measure judgment, which is now the primary determinant of productivity.

Worse: some hiring processes explicitly ban AI tool usage during coding tests. This makes the test measure a skill the candidate will never use on the job. It's like hiring a writer and banning them from using a spell checker during the interview.

We've updated our evaluation process to account for the AI-assisted reality. Here's what we look for:

Tool awareness. Does the candidate know what tools exist and what they're good at? Someone who says "I use Copilot for everything" is less sophisticated than someone who says "I use Copilot for implementation, Claude for architecture planning, and I avoid AI for security-critical code."

Output judgment. Can they evaluate AI-generated code critically? We show candidates a piece of AI-generated code with a subtle bug and ask them to review it. The speed and accuracy of their review tells us a lot about their judgment.

Workflow integration. How do they combine AI tools with traditional development practices? The best developers use AI to accelerate their existing workflow, not replace it. They still think about architecture. They still write tests. They still do thorough code reviews. They just do all of it faster.
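To make the output-judgment exercise concrete, here is a hypothetical snippet of the kind of review task we mean. It is an illustration, not one of our actual interview questions, and the function names are invented for this example. The docstring promises 1-indexed pages, but the offset math treats pages as 0-indexed. The code runs without errors and looks reasonable at a glance, which is exactly what makes it a useful test of judgment.

```python
def paginate_buggy(items, page, page_size):
    """Return one page of results; pages are 1-indexed."""
    # Plausible-looking output: the offset treats `page` as 0-indexed,
    # so page 1 silently skips the first page_size items.
    start = page * page_size
    return items[start:start + page_size]


def paginate(items, page, page_size):
    """Return one page of results; pages are 1-indexed."""
    # Reviewed version: reject invalid input and convert the
    # 1-indexed page number to a 0-based offset.
    if page < 1 or page_size < 1:
        raise ValueError("page and page_size must be >= 1")
    start = (page - 1) * page_size
    return items[start:start + page_size]


if __name__ == "__main__":
    items = list(range(10))
    print(paginate_buggy(items, 1, 3))  # [3, 4, 5]: first page skipped
    print(paginate(items, 1, 3))        # [0, 1, 2]
```

A strong reviewer spots the off-by-one page quickly. They also notice the missing input validation and ask what the caller should get for a page past the end. Here, the reviewed version returns an empty list.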

Here are questions we've found useful:

The last question is especially revealing. Engineers with good judgment have clear boundaries. Engineers without good judgment use AI indiscriminately or not at all.

If your hiring process doesn't account for AI-assisted development, you're evaluating candidates on a skill set that's already outdated. You'll hire people who are technically competent but operationally slower than they should be.

The developers who thrive in 2026 are the ones who treat AI as a multiplier, not a replacement. They understand their tools deeply enough to know when AI helps and when it hurts. They ship faster because they spend less time on work that doesn't require human judgment.

Your hiring process should find those people. If it still measures raw coding speed and bans AI tools during interviews, it won't.

Founder and CEO of EnzRossi. After years working with tech, I started EnzRossi. Here I write about hiring, remote teams, and what actually makes a developer great.
